Art Maslow

Founder of Foxtery

Organizations are adopting AI at record rates, yet only 12% of CEOs report actual cost or revenue benefits. This productivity paradox creates an urgent mandate for L&D professionals: traditional role-based training that teaches "how to use tools" is failing.

The ground is shifting. 61% of employees expect their job role will change significantly in 2026 due to emerging technologies. Training employees for static job descriptions no longer works when roles themselves are in constant flux.

This guide explores where role-based training goes wrong and how to realign it with real business needs. You'll discover a four-pillar framework that transforms role-based training from static job task lists into dynamic skills architectures. By the end, you'll have a 6-week implementation plan you can start Monday.

What is role-based training?

Role-based training is learning designed specifically for the responsibilities, challenges, and outcomes of a particular job function. Unlike generic training programs that treat all employees the same, role-based training recognizes that a sales manager needs different skills than a customer success representative.

Historically, this meant creating courses aligned to job descriptions. Training followed static responsibilities. That model is collapsing.

Why traditional role-based training is breaking down

Emerging technologies are already reshaping roles across organizations. When roles themselves are unstable, training built on fixed job descriptions becomes obsolete within months.

The shift is from "train for a job" to "train for adaptability." Organizations are moving toward skills-based architectures where capabilities matter more than titles.

Four forces are driving this:

AI disruption: Tools that automate routine tasks are reshaping what humans actually do

Skills gaps: 63% of employers cite skills gaps as their biggest barrier to transformation

Retention pressures: Employees increasingly choose employers based on learning opportunities

Compensation evolution: Skills now command pay premiums that tenure never could

The answer is to redesign role-based training around transferable skills instead of static task lists.

The 2026 skills crisis: why role-based training matters more than ever

External hiring has failed to solve the skills shortage. Only 5% of organizations have the necessary skills and headcount to complete high-priority projects, according to Robert Half's 2026 research. The talent you need simply isn't available on the market.

90% of organizations cite learning opportunities as their #1 retention strategy, according to research from the University of New England. Not compensation. Not flexibility. Learning.

The math is simple:

Cost of replacing an employee: 50-200% of annual salary

Cost of comprehensive training program: 1-5% of annual salary

ROI of retention: 10-40x the training investment
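To make that arithmetic explicit, here is a minimal Python sketch that compares the two cost ranges above. The function name and the salary figure are illustrative assumptions, not numbers from the research cited. The 10-40x range matches the upper training-cost estimate (5% of salary); if training costs less than that, the ratio is higher.

```python
def replacement_to_training_ratio(replacement_pct: float, training_pct: float) -> float:
    """How many times more it costs to replace an employee than to train one.

    Both arguments are percentages of annual salary.
    """
    if training_pct <= 0:
        raise ValueError("training_pct must be positive")
    return replacement_pct / training_pct


# Illustrative salary only; the ratio itself does not depend on it.
salary = 80_000
replacement_cost = salary * 50 / 100  # low end of the 50-200% range
training_cost = salary * 5 / 100      # high end of the 1-5% range

low = replacement_to_training_ratio(50, 5)
high = replacement_to_training_ratio(200, 5)

print(f"Replacement: ${replacement_cost:,.0f}, training: ${training_cost:,.0f}")
print(f"Ratio at 5% training cost: {low:.0f}x to {high:.0f}x")
```

Swap in your own salary bands and training budget to see where your organization lands.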

Modern AI course creators like Foxtery lower training costs by removing the content bottleneck. Teams can generate and continuously update role-specific learning automatically.

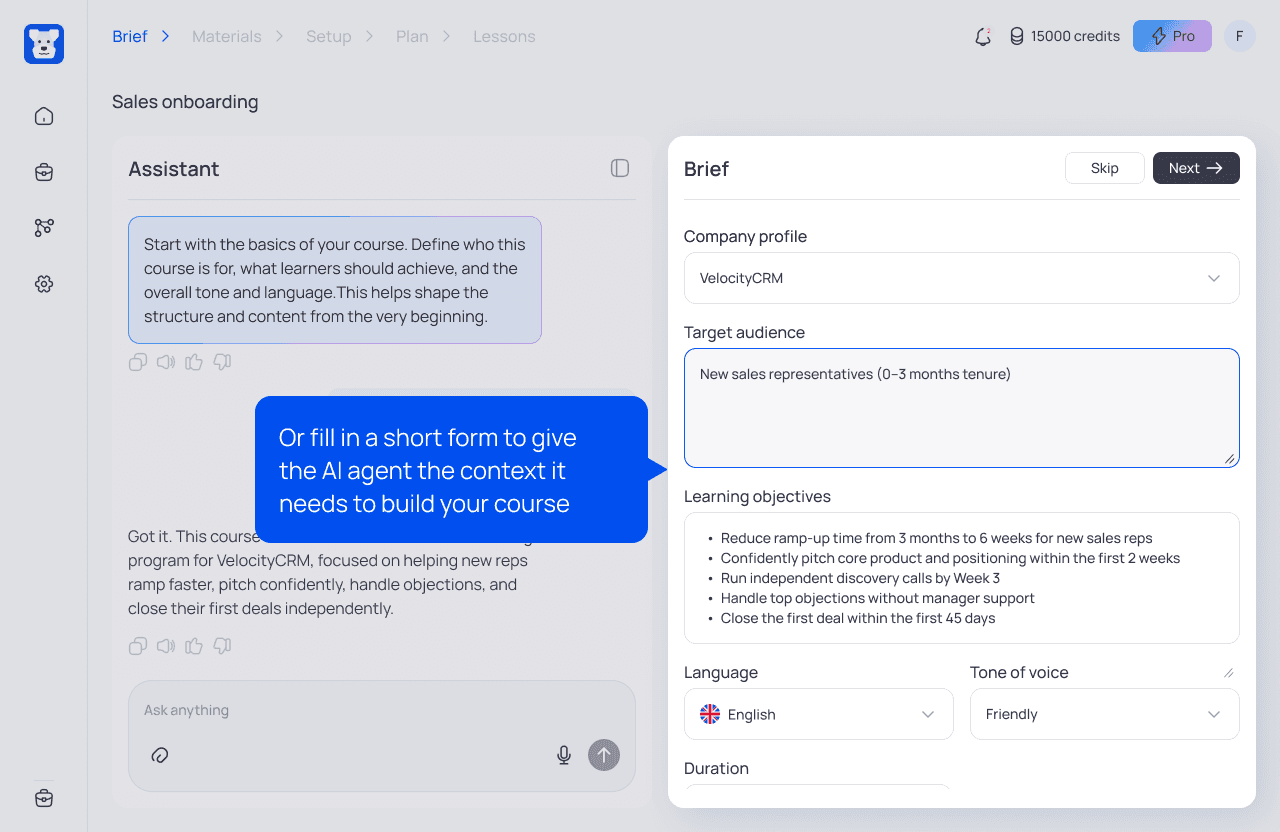

As shown in the screenshot above, when building a course you define the team and business goals first, and the AI agent structures the training around them automatically.

Employees expect development. When you don't provide it, they leave. But quantity of training isn't enough. The training must be relevant, timely, and tied to career progression.

The four pillars of modern role-based training

Effective role-based training in 2026 relies on four integrated pillars. Each one solves a specific weakness of traditional training programs. They work as a system, so applying only one or two will limit the overall impact.

Pillar 1: Skills architecture over job descriptions

Job descriptions become outdated within months. They describe responsibilities, not capabilities. They're backward-looking documents that reflect what someone did, not what they need to do next.

Skills architecture takes a different approach. Instead of tying learning to a fixed job description, it focuses on the core capabilities that drive performance across situations. When a role evolves, those capabilities still apply, even if responsibilities or titles change.

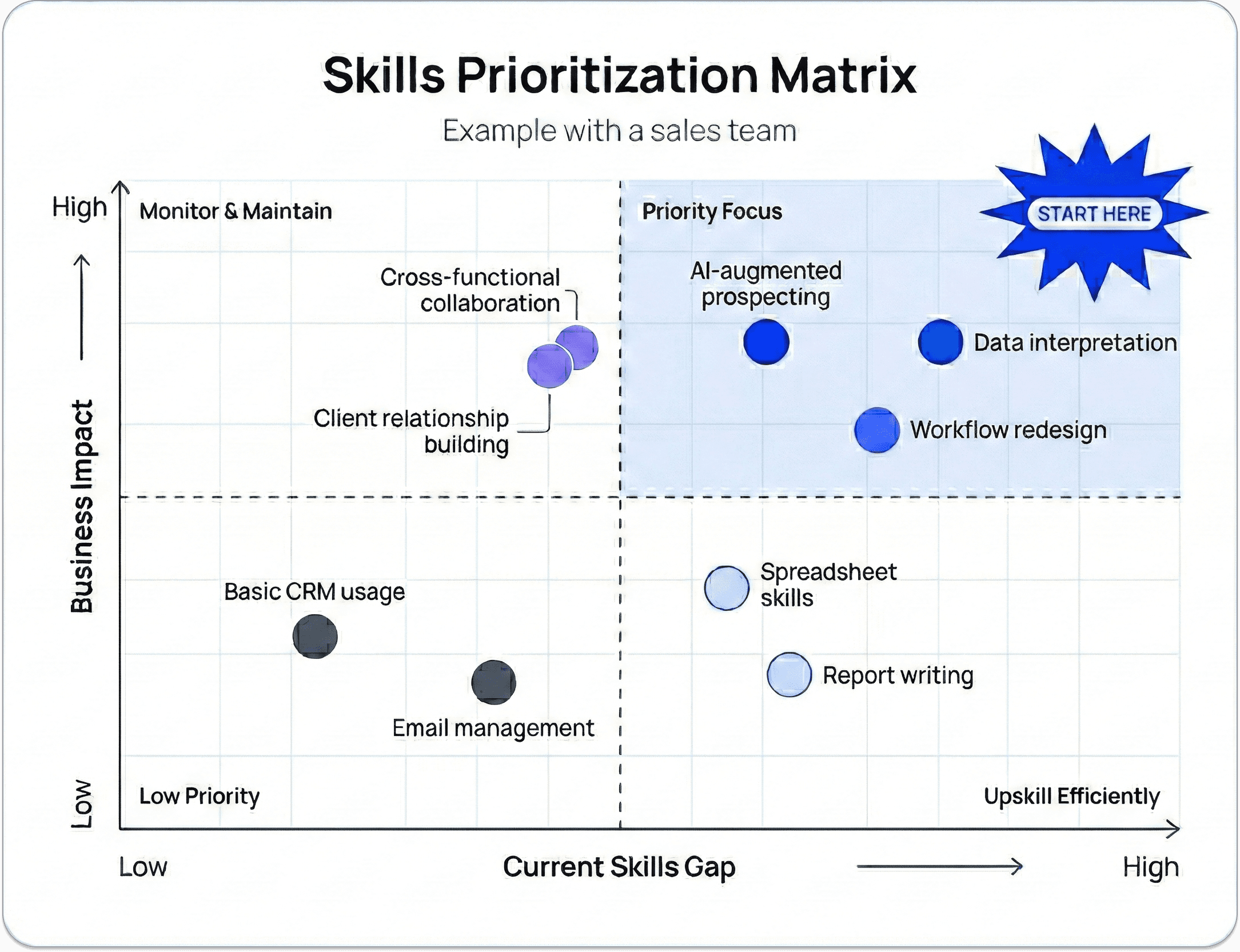

Consider the transformation of a "Sales Manager" role:

| Old model | New model |
|---|---|
| Build client relationships | AI-augmented prospecting |
| Close deals | Data interpretation |
| Manage pipeline | Workflow redesign |

The new model describes how work gets done, not just what gets done. These skills transfer when the role inevitably changes.

How to build your skills architecture:

Start with 2-3 critical roles facing the most disruption

Interview top performers to extract the skills they actually use

Categorize skills into technical, cognitive, and interpersonal buckets

Map each skill to specific business outcomes

Prioritize based on impact and current gap size
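If you want to keep the architecture somewhere more structured than a slide, the steps above can be sketched as a small data model. This is only one possible shape: the `Skill` fields, the 1-5 scoring scale, the category assigned to each skill, and the example scores are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

CATEGORIES = {"technical", "cognitive", "interpersonal"}


@dataclass(frozen=True)
class Skill:
    name: str
    category: str          # one of CATEGORIES
    business_outcome: str  # the outcome this skill is mapped to
    impact: int            # 1 (low) to 5 (high), scored by the L&D team
    gap: int               # 1 (small gap) to 5 (large gap)

    def __post_init__(self):
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")


def group_by_category(skills):
    """Return {category: [skill names]}, highest impact x gap first."""
    grouped = defaultdict(list)
    for skill in sorted(skills, key=lambda s: s.impact * s.gap, reverse=True):
        grouped[skill.category].append(skill.name)
    return dict(grouped)


# Hypothetical entries for the Sales Manager example; the scores are made up.
sales_manager = [
    Skill("AI-augmented prospecting", "technical", "pipeline growth", impact=5, gap=4),
    Skill("Data interpretation", "cognitive", "forecast accuracy", impact=4, gap=3),
    Skill("Workflow redesign", "cognitive", "shorter sales cycle", impact=3, gap=5),
]

print(group_by_category(sales_manager))
```

Because each skill carries its own business outcome, the record stays useful when the job title around it changes.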

Pillar 2: AI governance and validation training

Most AI training teaches the wrong thing. Employees don't need another tutorial on writing ChatGPT prompts. They need to understand when AI outputs require human review, how to cross-reference AI-generated data, and how to redesign workflows around AI capabilities.

43% of organizations now use AI in HR, up from 26% a year ago. But the same Deloitte research reveals that advanced HR AI models hallucinate or generate inaccurate statements in about 3-7% of complex queries.

Three to seven percent might sound small. But when you're making hiring decisions or compensation adjustments, a 5% error rate is catastrophic.

What AI governance training actually includes:

Recognizing high-risk scenarios: Which AI outputs need human validation

Cross-referencing techniques: How to verify AI-generated information

Understanding model limitations: Why AI excels at patterns but struggles with novel situations

Workflow redesign: When to use AI, when to use human judgment, and how to combine both

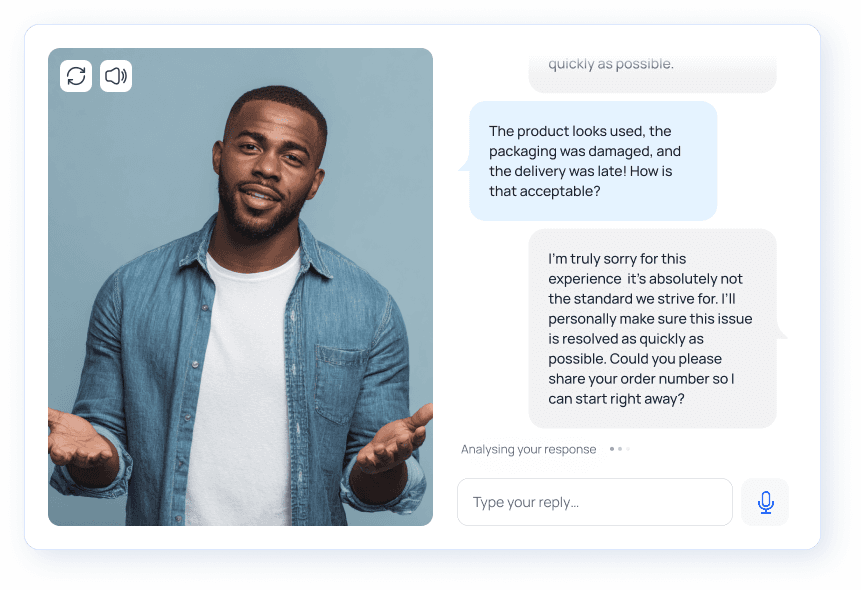

Example: Customer Success team validating AI responses

A customer success team uses AI to draft replies to technical support tickets. Before introducing governance training, reps often sent those responses as-is. After training, they follow a simple validation routine:

Check factual accuracy: cross-reference technical details with product documentation

Adjust tone and context: make sure the response matches the customer’s situation and urgency

Verify completeness: confirm the answer addresses the full question, not just the most obvious part

The AI speeds up the draft. The human ensures it is accurate, complete, and appropriate.

For more complex or high-risk cases, this validation skill can be strengthened through structured practice. Using a conversational simulator in Foxtery, Customer Success teams can train on realistic client scenarios that mirror real-world challenges.

With regular practice, reps get better at spotting issues and fixing them quickly. Over time, reviewing AI output becomes second nature, not an extra step they have to think about.

Pillar 3: Flow-of-work learning integration

Formal training hours are plummeting. Employees don't have time for hour-long courses. They're overwhelmed, multitasking during training sessions, and forgetting content within days.

Shorter courses alone will not fix the problem. Learning needs to be built into the flow of work, with guidance delivered right when someone needs it.

This represents a fundamental shift from courses to performance support. Instead of "complete this training module," it's "here's a 90-second video showing exactly how to handle this objection you're facing right now."

Flow-of-work learning formats:

Microlearning videos: 2-3 minute tutorials embedded directly in tools employees use

Searchable knowledge bases: Employees find answers themselves

AI-powered chatbots: Instant answers to procedural questions

Peer learning channels: Slack or Teams groups where employees share real-time solutions

Implementation example: Sales team objection handling

Instead of running a quarterly workshop, the team embeds objection training directly into their CRM.

When a rep logs a specific objection, the system:

Surfaces a 90-second video with a proven response

Suggests relevant response frameworks based on the deal

Links to real examples from successful past conversations

Provides access to a Slack channel for quick peer input on unusual cases

Guidance appears at the moment of need, so reps apply it immediately instead of trying to remember something from a workshop months ago.
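The lookup behind an integration like this can be very simple. The sketch below is a hypothetical illustration, not the API of any real CRM: the catalog contents, file paths, and Slack channel name are invented, and deal-specific matching is left out to keep it short.

```python
from dataclasses import dataclass, field


@dataclass
class ObjectionSupport:
    video: str  # short clip with a proven response
    frameworks: list = field(default_factory=list)
    examples: list = field(default_factory=list)


PEER_CHANNEL = "#sales-objections"  # hypothetical Slack channel name

# Hypothetical catalog; a real one would live in the CRM or learning platform.
CATALOG = {
    "price": ObjectionSupport(
        video="videos/price-objection-90s.mp4",
        frameworks=["value reframing"],
        examples=["calls/renewal-won-after-price-pushback"],
    ),
}


def support_for(objection: str) -> dict:
    """Return the resources to show a rep who just logged this objection."""
    entry = CATALOG.get(objection.strip().lower())
    if entry is None:
        # Unusual case: no curated content yet, so route the rep to peers.
        return {"peer_channel": PEER_CHANNEL}
    return {
        "video": entry.video,
        "frameworks": entry.frameworks,
        "examples": entry.examples,
        "peer_channel": PEER_CHANNEL,
    }


print(support_for("Price"))
print(support_for("timing"))
```

Objections that fall through to the peer channel are also a useful signal of which content to create next.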

Pillar 4: Skills-based compensation alignment

Employees prioritize training that increases their earning potential. When training has no connection to compensation, it competes with every other demand on someone's time. And it loses.

AI and ML skills command 30-40% pay premiums, according to Ernst & Young research. Skills-based compensation is already reshaping how employees think about career development.

This creates a powerful marketing opportunity for L&D. Instead of "Complete this training because it's required," you can say: "This training will make you eligible for [role/project/raise]."

How to align training with compensation:

Map skills to promotion criteria

Create skill badges or certifications

Publish skill progression paths

Connect training to project assignments

What if we don't have skills-based pay? Start small. Give employees who complete critical training first access to interesting projects. Feature skill achievements in performance reviews. Use skills as criteria for team lead selection. Make the connection explicit.

Building your role-based training program: a practical implementation plan

Here is a practical 6-week plan for L&D teams working with limited time and resources. Focus on getting it live quickly, then improve it as you go.

Phase 1: Role audit and skills mapping (Week 1-2)

Step 1: Identify 2-3 critical roles to start with. Choose roles that are:

- Facing the most disruption from AI

- Experiencing high turnover

- Critical to revenue or customer satisfaction

- Suffering from visible skills gaps

Step 2: Interview 3-5 top performers in each role. Ask:

- What skills do you actually use daily?

- What skills separate top performers from average ones?

- What skills will become more important in the next 12 months?

Step 3: Extract and categorize skills into:

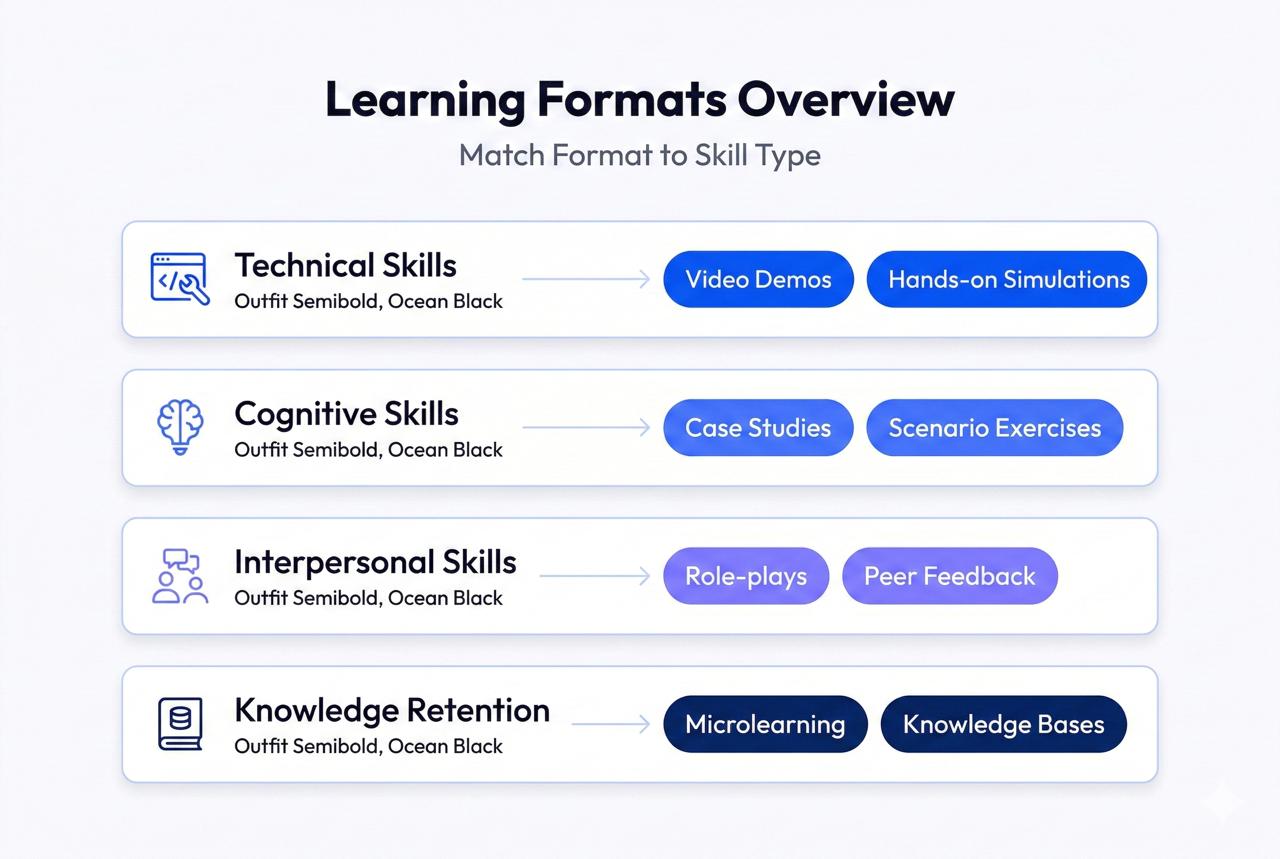

- Technical skills (tools, systems, domain knowledge)

- Cognitive skills (analysis, problem-solving, decision-making)

- Interpersonal skills (communication, collaboration, influence)

Step 4: Prioritize using a simple 2x2 matrix:

- X-axis: Current skills gap (low to high)

- Y-axis: Business impact (low to high)

- Focus on high-impact, high-gap skills first
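The 2x2 matrix from Step 4 can be expressed in a few lines of Python. This is a sketch under assumptions: scores are normalized to a 0-1 scale, the 0.5 cutoff is arbitrary, and the quadrant labels other than "train first" are invented names rather than part of the framework.

```python
def quadrant(gap: float, impact: float, threshold: float = 0.5) -> str:
    """Place a skill in the 2x2 matrix. Both scores are on a 0-1 scale."""
    high_gap = gap >= threshold
    high_impact = impact >= threshold
    if high_impact and high_gap:
        return "train first"
    if high_impact:
        return "maintain"      # important, but the team is already capable
    if high_gap:
        return "train later"   # real gap, limited business impact
    return "deprioritize"


# Hypothetical scores as (gap, impact) pairs.
skills = {
    "AI output validation": (0.8, 0.9),
    "CRM data entry": (0.2, 0.3),
    "Negotiation": (0.3, 0.8),
}

first = [name for name, (gap, impact) in skills.items()
         if quadrant(gap, impact) == "train first"]
print(first)
```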

Phase 2: Content strategy and modality selection (Week 3-4)

Not all skills need the same training format. Match the format to the skill type.

You don't need Hollywood production values. A 3-minute screen recording with clear audio beats a perfectly scripted video that takes three weeks to produce. Launch with "good enough" content and improve based on feedback.

Leveraging AI to accelerate content creation:

For faster course creation, use modern AI course builders like Foxtery. The entire process can be reduced to a few clear steps:

Set the context by defining the role, team goals, and business objectives

Upload relevant documents and materials

Review and approve suggested learning objectives, formats, and instructional methodologies

Approve the generated course plan

Receive ready-to-use lessons with interactive formats, structured into clear, digestible sections

If you have never used it before, start with this setup guide to see how to configure it correctly and launch your first course.

Phase 3: Launch and rapid iteration (Week 5-6)

Step 1: Pilot with 10-20 people in one role. Don't try to launch company-wide immediately.

Step 2: Set up feedback loops:

- Weekly check-ins with pilot participants

- Usage analytics (what content gets accessed, what gets skipped)

- Performance metrics (are trained employees performing better?)

- Direct questions: "What would make this more useful?"

Step 3: Iterate based on performance data, not just opinions. If employees say they love a module but never access it, trust the behavior data.

Step 4: Make changes weekly during the pilot. Don't wait for the "perfect" version.
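One way to act on Step 3 is to flag modules where stated opinion and actual behavior disagree. The sketch below assumes you can export an average rating and an open count per module; the field names and both thresholds are placeholders to adjust for your pilot.

```python
def flag_mismatches(modules, min_rating=4.0, min_access_rate=0.3):
    """Return names of modules that are rated highly but rarely opened."""
    flagged = []
    for m in modules:
        access_rate = m["opened_by"] / m["pilot_size"]
        if m["avg_rating"] >= min_rating and access_rate < min_access_rate:
            flagged.append(m["name"])
    return flagged


# Hypothetical pilot data for a 15-person group.
pilot = [
    {"name": "Objection handling", "avg_rating": 4.6, "opened_by": 3, "pilot_size": 15},
    {"name": "AI output review", "avg_rating": 4.1, "opened_by": 12, "pilot_size": 15},
]

print(flag_mismatches(pilot))
```

Flagged modules are good candidates for the weekly check-in: ask why people like the idea but never open the content.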

Key metrics to track:

- Time-to-proficiency for new hires

- Performance improvements in trained vs. untrained employees

- Employee confidence scores

- Manager feedback on skill application

Once your pilot shows results, expand step by step.

Weeks 7 and 8: roll it out to the rest of the initial role.

Weeks 9 to 12: extend it to the next priority role.

From the beginning, plan regular updates. A training program should evolve continuously, not be treated as a finished project.

Common mistakes to avoid in role-based training

Even with a solid framework, L&D teams make predictable mistakes. Here are the four most common pitfalls:

Mistake #1: Training on tools instead of workflows

Teaching someone which buttons to click isn't training. It's documentation. Real training teaches the thinking process: When do I update this field? What information do I need before I can move to the next stage? Tools change constantly. Workflows are more durable.

Mistake #2: Creating training that ignores the time crunch

Employees are overwhelmed. Your beautifully designed 60-minute course competes with urgent customer issues, project deadlines, and 147 unread emails. It will lose. Design for 5-10 minute consumption. Make content accessible at the moment of need, not in a scheduled session.

Mistake #3: Building static programs in a dynamic environment

Training content has a shelf life of about six months in 2026. Tools update. Processes change. Best practices evolve. Yet most L&D teams build training as if it's permanent. Build update mechanisms from day one. Assign content owners. Set quarterly review cycles.
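A quarterly review cycle is easy to automate with a simple staleness check. The sketch below assumes each content item records an owner and a last-reviewed date; the 90-day interval stands in for "quarterly" and the library entries are invented.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # roughly one quarter; an assumption


def overdue_for_review(content, today):
    """Return (title, owner) pairs whose last review is older than the interval."""
    return [
        (item["title"], item["owner"])
        for item in content
        if today - item["last_reviewed"] > REVIEW_INTERVAL
    ]


# Hypothetical content library.
library = [
    {"title": "CRM pipeline workflow", "owner": "sales-enablement",
     "last_reviewed": date(2026, 1, 10)},
    {"title": "AI output validation", "owner": "cs-ops",
     "last_reviewed": date(2026, 4, 20)},
]

print(overdue_for_review(library, today=date(2026, 5, 1)))
```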

Mistake #4: Measuring activity instead of outcomes

Completion rates don't matter. Course enrollments don't matter. Hours of training delivered don't matter. What matters: Are trained employees performing better? Are they closing deals faster? Reducing errors? Staying longer? Tie training to business KPIs. Measure behavior change, not content consumption.

Measuring the impact of role-based training

You need both leading indicators (early signals that training is working) and lagging indicators (ultimate business outcomes).

Leading indicators:

- Skill acquisition rates (assessment scores, certification completion)

- Employee confidence scores (self-reported readiness)

- Content engagement (which resources get used, which get ignored)

- Manager observations (are they seeing behavior change?)

Lagging indicators:

- Performance metrics (sales numbers, customer satisfaction, error rates)

- Retention rates (are trained employees staying longer?)

- Promotion rates (are trained employees advancing faster?)

- Business outcomes (revenue, efficiency, quality improvements)

Build a simple measurement dashboard. A spreadsheet tracking 5-7 key metrics is enough to prove value.
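If your spreadsheet exports to rows, the core comparison behind such a dashboard fits in a short function. This is a minimal sketch with invented records and field names. A raw difference between groups does not prove the training caused it, especially with a small pilot, so treat the output as a starting point for discussion.

```python
from statistics import mean


def compare_groups(records, metric):
    """Average a metric for trained vs. untrained employees and report the difference."""
    trained = [r[metric] for r in records if r["trained"]]
    untrained = [r[metric] for r in records if not r["trained"]]
    if not trained or not untrained:
        raise ValueError("need at least one employee in each group")
    t, u = mean(trained), mean(untrained)
    return {"trained": t, "untrained": u, "difference": t - u}


# Hypothetical rows, one per employee.
records = [
    {"trained": True, "days_to_proficiency": 40, "csat": 4.5},
    {"trained": True, "days_to_proficiency": 50, "csat": 4.3},
    {"trained": False, "days_to_proficiency": 60, "csat": 4.0},
    {"trained": False, "days_to_proficiency": 70, "csat": 4.2},
]

for metric in ("days_to_proficiency", "csat"):
    print(metric, compare_groups(records, metric))
```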

Communicating ROI to leadership:

Executives don't care about training metrics. They care about business metrics. Translate your measurements into their language:

Instead of "85% completion rate," say "20% reduction in time-to-productivity"

Instead of "high engagement scores," say "15% improvement in customer satisfaction"

Instead of "positive feedback," say "$400K in retained revenue from reduced turnover"

Make the business case in terms they already track. Connect your training outcomes to their strategic priorities.

The real risk is standing still

Roles are already changing, and skills gaps are already affecting performance. The cost shows up in slower onboarding, missed revenue, and disengaged employees.

Modern role-based training is not about producing more courses. It is about building a system that evolves with your business.

Start with one critical role. Define the skills that drive results. Launch a focused pilot and improve it continuously.

If speed has been the blocker, tools like Foxtery remove the content bottleneck, allowing you to launch and update training in hours instead of months.

The companies that treat learning as a living system will move faster than those that treat it as a one-time project.